Combining multiscale processing and cross-head knowledge distillation to detect small UAVs in challenging environments using a YOLOv8-based architecture.

The proliferation of small Unmanned Aerial Vehicles (UAVs) poses significant security challenges to critical infrastructure. Detecting these small targets in real-time on edge devices is difficult due to their low radar cross-sections and complex environmental backgrounds. This report presents a computer vision system designed to address these challenges using a YOLOv8-based architecture. The system is enhanced by two key strategies: (1) Multiscale Processing using a 5-crop inference mechanism with Non-Maximum Weighted (NMW) fusion to simulate a zoom effect without interpolation loss; and (2) Cross-Head Knowledge Distillation (CrossKD) combined with Progressive KD to efficiently transfer detection-sensitive features from a large Teacher model to a lightweight Student model.

As commercial drones become more accessible, the risk of unauthorized surveillance and airspace intrusion increases.

Drones often occupy a tiny fraction of the frame (<5%), and standard resizing in object detectors causes significant feature loss.

High-accuracy models (e.g., YOLOv8-X) are too heavy for edge devices, so the deployed model needs the speed of a 'Nano' model with accuracy closer to that of a 'Large' model.

The system must generalize across diverse environments including clear sky, fog, night, and various weather conditions.

Our approach combines two strategies, multiscale inference and knowledge distillation, to achieve high accuracy with real-time performance.

To resolve the small object issue, we implemented a grid cropping strategy during inference. Instead of resizing the entire large image down to the model input size (which destroys small details), we process the image in segments.
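The cropping step can be sketched as follows. This is a minimal illustration, not the project's actual code: the source does not specify the crop layout, so the four-corners-plus-center arrangement, the `frac` overlap parameter, and the function names `five_crop_boxes` and `to_frame_coords` are all assumptions.

```python
def five_crop_boxes(width, height, frac=0.6):
    """Return five (x1, y1, x2, y2) crop windows in full-frame pixels.

    Assumed layout: four corner crops plus one center crop, each covering
    `frac` of the frame in both dimensions so neighbouring crops overlap.
    """
    cw, ch = round(width * frac), round(height * frac)
    cx, cy = (width - cw) // 2, (height - ch) // 2
    return [
        (0, 0, cw, ch),                             # top-left
        (width - cw, 0, width, ch),                 # top-right
        (0, height - ch, cw, height),               # bottom-left
        (width - cw, height - ch, width, height),   # bottom-right
        (cx, cy, cx + cw, cy + ch),                 # center
    ]


def to_frame_coords(box, crop):
    """Shift a detection from crop-local pixels back to full-frame pixels."""
    x1, y1, x2, y2 = box
    ox, oy = crop[0], crop[1]
    return (x1 + ox, y1 + oy, x2 + ox, y2 + oy)


# Usage: each crop would be passed to the detector on its own, and every
# detection shifted back to frame coordinates before fusion.
crops = five_crop_boxes(1920, 1080)
detection_in_frame = to_frame_coords((10, 20, 30, 40), crops[3])
```

Because each crop is smaller than the full frame, a drone covers more pixels after the crop is resized to the model input than it would after resizing the whole frame.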

Merging the per-crop results with standard NMS proved too aggressive. We adopted Non-Maximum Weighted (NMW) fusion instead, which computes a confidence-weighted average of the coordinates of overlapping boxes.
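A simplified sketch of confidence-weighted fusion is shown below. It is an illustration under stated assumptions rather than the project's implementation: the greedy clustering, the `iou_thr` value, and the function names are hypothetical, and weights here use the confidence score alone, as described in the text.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nmw_fuse(boxes, scores, iou_thr=0.5):
    """Fuse overlapping boxes by confidence-weighted coordinate averaging.

    Assumes all scores are positive. Returns a list of (box, score) pairs,
    where score is the highest confidence in each cluster.
    """
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    used = set()
    fused = []
    for i in order:
        if i in used:
            continue
        # Cluster the top-scoring box with every unused box that overlaps it.
        cluster = [i] + [
            j for j in order
            if j != i and j not in used and iou(boxes[i], boxes[j]) >= iou_thr
        ]
        used.update(cluster)
        total = sum(scores[j] for j in cluster)
        box = tuple(
            sum(scores[j] * boxes[j][k] for j in cluster) / total
            for k in range(4)
        )
        fused.append((box, scores[i]))
    return fused
```

Unlike standard NMS, which keeps only the top-scoring box and discards the rest, every overlapping box contributes to the final coordinates, which helps when the same drone is seen in several overlapping crops.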

Note: Using only the 5 crops (discarding the full-frame original image) yielded the best F1-score and AP, as the original resized frame often introduced false positives.

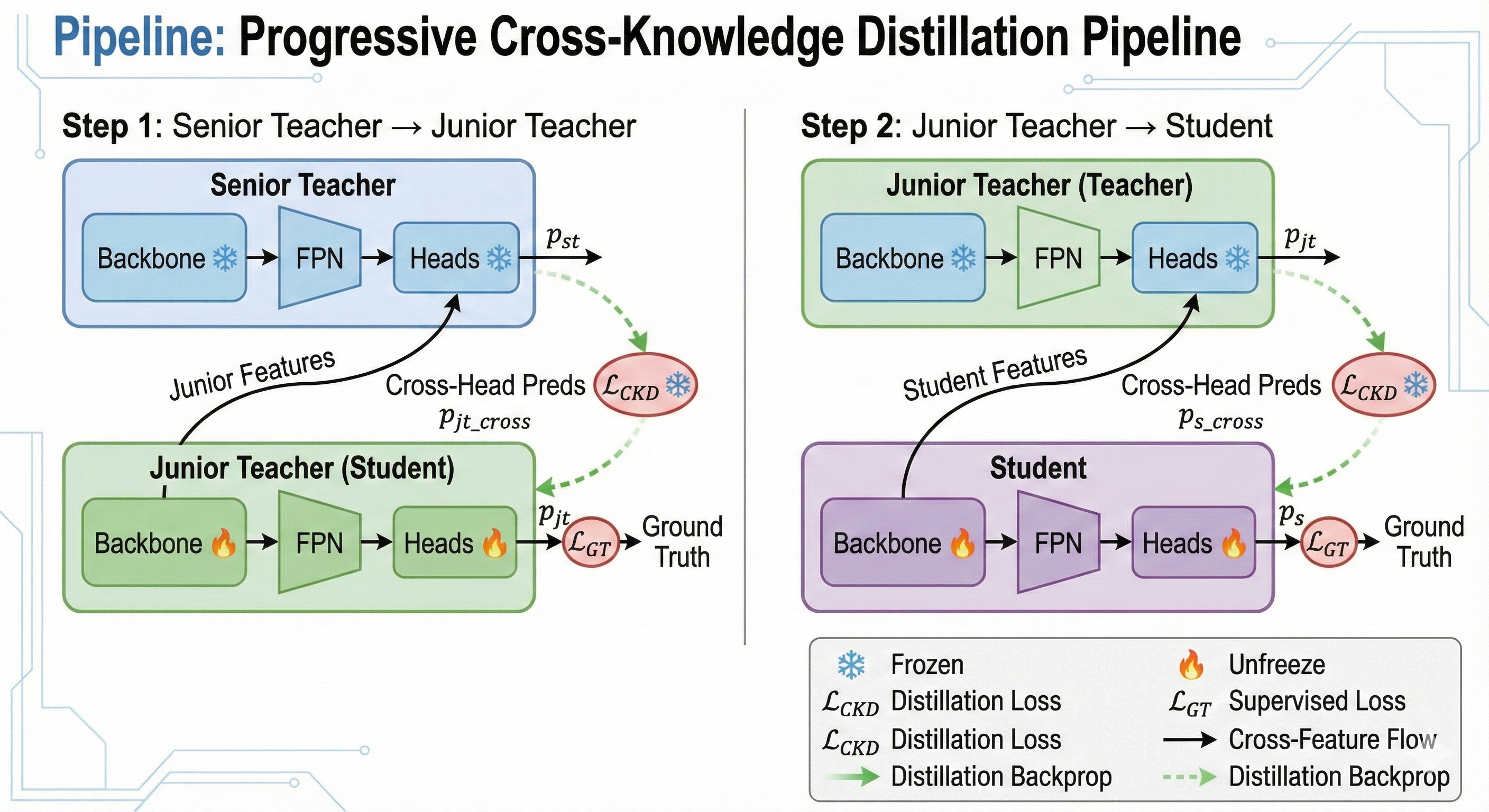

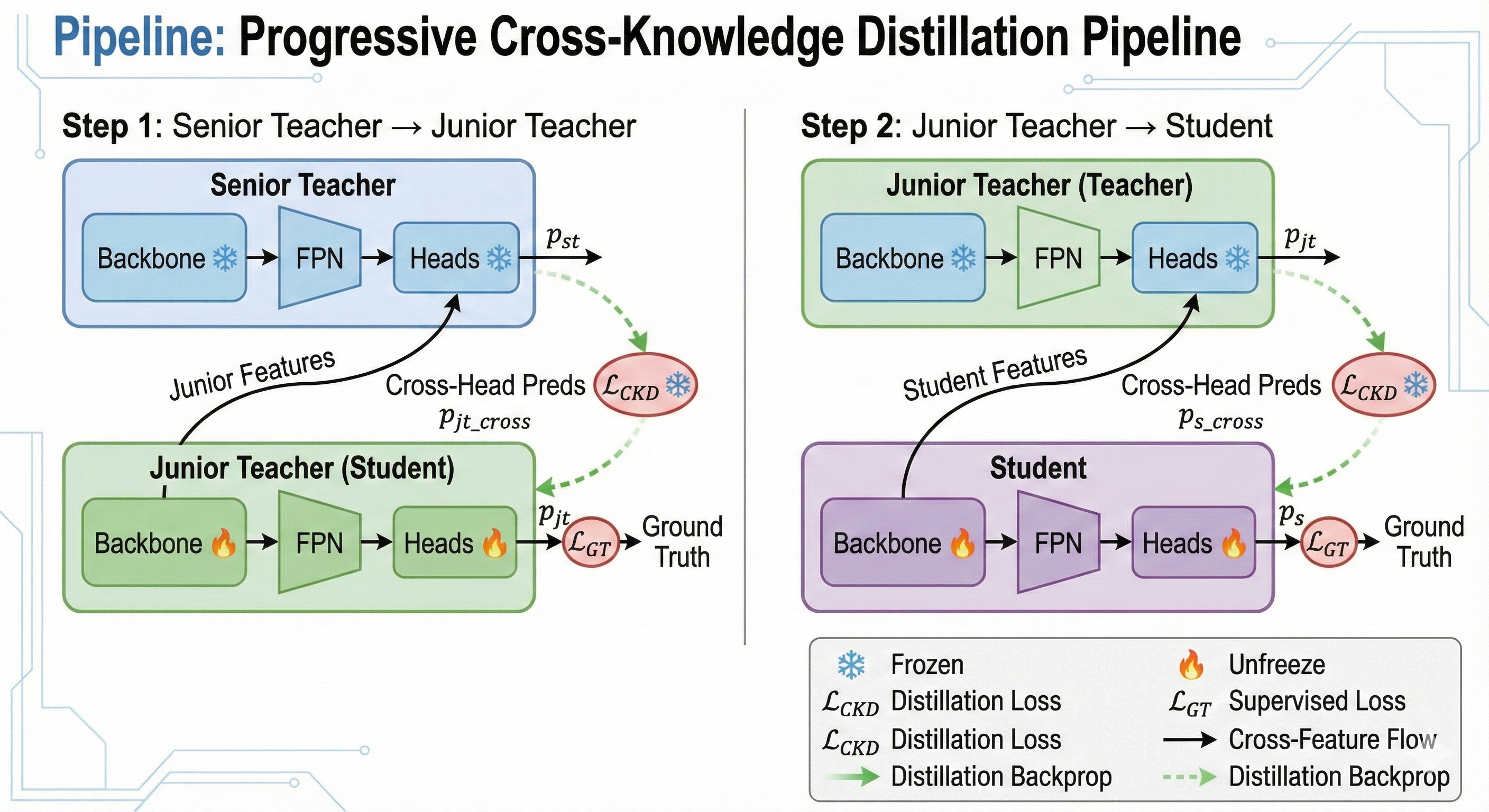

To achieve low latency, we distilled knowledge from a heavy Teacher model to a lightweight Student model (YOLOv8-Nano) using Cross-Head Knowledge Distillation (CrossKD).

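Directly distilling from a massive model to a tiny one often causes "Knowledge Shock" due to the capacity gap. We therefore employed a progressive pipeline: knowledge is first distilled from YOLOv8-X into YOLOv8-Large, which then serves as the intermediate Teacher for YOLOv8-Nano.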

Instead of traditional feature mimicking, CrossKD establishes cross-connections between the Teacher and Student detection heads.

By interacting directly at the head level, the model learns better bounding box regression and focuses on detection-sensitive features rather than background noise.
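The idea can be illustrated with a toy loss computation. This sketch follows the published CrossKD formulation, in which the Student's intermediate head features are passed through the frozen Teacher head and the resulting cross-head predictions are trained to match the Teacher's own predictions. Whether this project's implementation matches it exactly is an assumption. The single linear layer standing in for the head, the softmax/KL objective, and all function names are simplifications chosen for illustration.

```python
import math


def linear(weights, x):
    """Apply a toy linear 'head': one output per weight row."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))


def crosskd_loss(student_feat, teacher_feat, teacher_head):
    """Toy CrossKD loss for one location.

    The teacher head is treated as frozen: it produces the target from the
    teacher's features and the cross-head prediction from the student's.
    """
    teacher_pred = softmax(linear(teacher_head, teacher_feat))
    cross_pred = softmax(linear(teacher_head, student_feat))
    return kl_divergence(teacher_pred, cross_pred)


# Usage with hypothetical 2-dimensional features and an identity head.
head = [[1.0, 0.0], [0.0, 1.0]]
loss = crosskd_loss([2.0, 1.0], [1.0, 2.0], head)
```

In this formulation the distillation signal reaches the Student through the Teacher's head rather than through the Student's own head, which keeps it separate from the ground-truth detection loss applied to the Student's predictions.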

Overview of our training and inference architecture

Evaluated on VIP Cup 2025 and DUT Anti-UAV datasets

| Configuration | mAP@0.5 | mAP@0.5-0.9 | FPS |
|---|---|---|---|
| YOLOv11-Nano | 0.51 | 0.23 | 72 |
| YOLOv12-Nano | 0.55 | 0.22 | 69 |
| YOLOv8-Nano (Baseline) | 0.55 | 0.22 | 77 |
| YOLOv8-Nano (KD) | 0.65 | 0.25 | 77 |
| YOLOv8-Nano (Pretrained) | 0.79 | 0.48 | 77 |
| YOLOv8-Nano (Multiscale) | 0.61 | 0.23 | 28 |
| YOLOv8-Nano (KD + Pretrained + Multiscale) | 0.84 | 0.51 | 28 |
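The accuracy and speed trade-off in the table can be made explicit with a little arithmetic. The snippet below is only a convenience sketch: the `summarize` helper is hypothetical, the numbers are copied from the table, and the per-frame latency assumes frames are processed one at a time, so that latency is simply 1000 / FPS.

```python
def summarize(map50, fps, base_map50):
    """Return (absolute gain, relative gain in %, per-frame latency in ms)."""
    abs_gain = map50 - base_map50
    rel_gain_pct = 100.0 * abs_gain / base_map50
    latency_ms = 1000.0 / fps
    return abs_gain, rel_gain_pct, latency_ms


# (mAP@0.5, FPS) pairs taken from the results table.
rows = {
    "YOLOv8-Nano (Baseline)": (0.55, 77),
    "YOLOv8-Nano (KD)": (0.65, 77),
    "YOLOv8-Nano (Pretrained)": (0.79, 77),
    "YOLOv8-Nano (Multiscale)": (0.61, 28),
    "YOLOv8-Nano (KD + Pretrained + Multiscale)": (0.84, 28),
}

base_map50 = rows["YOLOv8-Nano (Baseline)"][0]
for name, (map50, fps) in rows.items():
    gain, rel, latency = summarize(map50, fps, base_map50)
    print(f"{name}: {gain:+.2f} mAP@0.5 ({rel:.0f}% relative), {latency:.1f} ms/frame")
```

The full configuration gains 0.29 mAP@0.5 over the baseline (about 53% relative) at roughly 35.7 ms per frame, compared with about 13.0 ms for the single-pass configurations.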

Experiments on the cropping strategy showed that including the original full-frame image often degraded precision.

We distilled knowledge from YOLOv8-X to YOLOv8-Nano, with YOLOv8-Large as an intermediate, using Cross-Head Knowledge Distillation (CrossKD). This raised mAP@0.5 from 0.55 to 0.65 (+0.10 absolute) by focusing on detection-sensitive features and improving localization.

Visual comparison of our detection system on RGB and IR (Infrared) videos

This project developed a UAV detection system that balances accuracy and speed. The multiscale processing strategy handles small objects by simulating a zoom effect, while the cross-head knowledge distillation combined with progressive KD enables efficient knowledge transfer with enhanced localization capabilities.

mAP@0.5 improved from 0.55 to 0.84

28 FPS on edge devices

Improved bounding box regression via CrossKD
